Chapter Five: Digital Audio

1. Digital Audio Overview

Almost all digital audio applications and devices, whether digital synthesis, sampling, digital recording, or CD or cell phone playback, rest on the basic concepts presented in this chapter. New forms of playback, file formats, compression, and data storage seem to change on a daily basis, but the underlying mechanisms for converting real-world sound into digital values, manipulating those data, and finally converting them back into real-world sound have not changed much since Max Mathews developed MUSIC I in 1957 at Bell Labs.

The theoretical framework that enabled musical pioneers such as Max Mathews to develop digital audio programs stretches back over a century. For the sake of brevity, this introduction highlights only the groundbreaking theoretical work done at Bell Labs in the first half of the 20th century. Bell Labs sought to transmit more voice data over existing telephone lines than analog transmission allowed, given the limited bandwidth of those lines. Many of the developments pertaining to computer music were directly related to work on this problem.

Section 4 will explore the implications of the Nyquist Theorem for the limitations of digital audio. In "Certain Topics in Telegraph Transmission Theory" (Trans. AIEE, vol. 47, pp. 617-644, Apr. 1928), Harry Nyquist, a Swedish-born physicist, laid out the principles for sampling analog signals at even intervals of time, at more than twice the highest frequency needed, so that they could be transmitted over telephone lines as "digital" signals, even though the technology to do so did not yet exist. Part of this work is now known as the Nyquist Theorem. Nyquist worked for AT&T, then Bell Labs. Twenty years later, Claude Shannon, a mathematician and early computer scientist who also worked at Bell Labs and later at M.I.T., developed a proof of Nyquist's theory (thereby making it a theorem).*

The importance of their work to information theory, computing, networks and digital audio cannot be overstated. For example, time division multiplexing (TDM), a data-stream technique used in high-speed networking, is the same technology that was used in earlier higher-end Digidesign Pro Tools systems. Pulse Code Modulation (PCM), a widely used method for encoding analog signals such as audio as binary data and decoding them again, was also developed early on at Bell Labs, with key contributions from John R. Pierce, a brilliant engineer in many fields, including the establishment of foundational computer music concepts.

Competing claims exist for the title of the world's first computer music program. So as not to offend our good friends down under in Oz, a mention of Geoff Hill is in order: in 1951 he programmed the CSIR MkI (developed in Sydney, credited as being among the first stored-program digital computers, and later known as CSIRAC) to play the Colonel Bogey March. Primitive as it may have been, this seems to be the earliest instance of programmed computer-generated music this author can find.

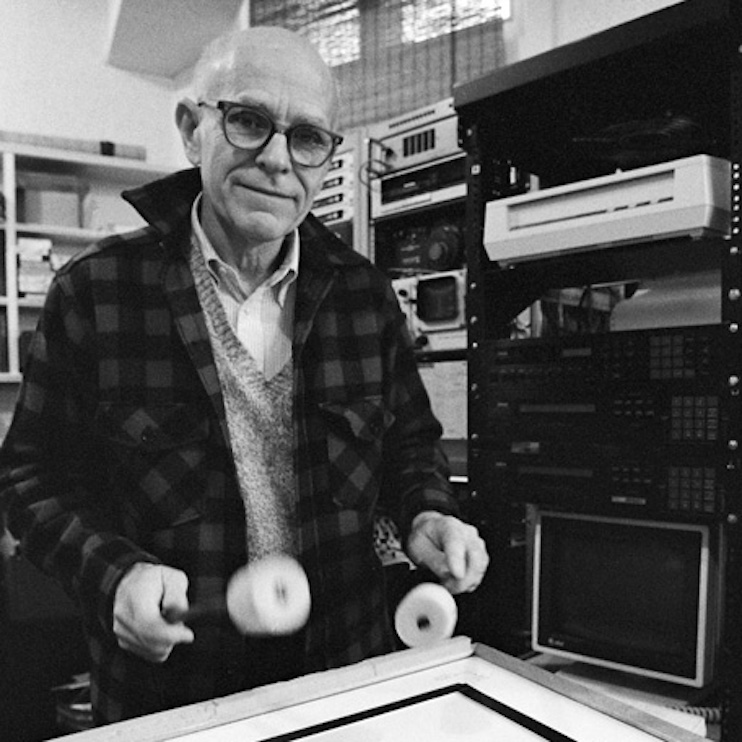

Max Mathews

Considered by many to be "the father of computer music," Max Mathews (1926-2011) was well known for his pioneering work in direct digital synthesis, culminating in the MUSIC series of languages such as Music V, in which sound-making instructions were at first entered on punch cards or at a computer terminal. Max worked for Bell Labs, IRCAM and eventually Stanford University. He is credited with developing the GROOVE system for computer control of analog synthesis and the Radio Drum performance interface (pictured above). Before his death, he could often be found joyously mingling at computer music conferences around the world.

Harry Nyquist

Harry Nyquist (1889-1976) was a Swedish-born physicist and electrical engineer who received his Ph.D. from Yale and pioneered developments in communication theory at Bell Labs. The Nyquist-Shannon Sampling Theorem embodies principles first applied by Nyquist to telegraphy in the 1920s in "Certain Topics in Telegraph Transmission Theory" (1928), which Shannon later applied to information theory. The Nyquist Theorem now governs bandwidth considerations for all modern digital audio applications. The Nyquist frequency is half the sampling rate of a digital system; only frequency components below it can be accurately reproduced.
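To make the Nyquist frequency concrete, here is a minimal Python sketch, assuming ideal sampling of pure (sinusoidal) tones and using the 44.1 kHz CD sampling rate as an example. The function names `nyquist_frequency`, `alias_frequency` and `sample_cosine` are hypothetical, chosen only for illustration. The folding rule in `alias_frequency` (the distance from a tone to the nearest whole multiple of the sampling rate) is a standard textbook way of predicting aliasing, offered here as an illustration rather than as a method taken from this chapter.

```python
import math


def nyquist_frequency(sample_rate_hz: float) -> float:
    """Half the sampling rate: the upper limit for accurately reproduced frequencies."""
    return sample_rate_hz / 2.0


def alias_frequency(signal_hz: float, sample_rate_hz: float) -> float:
    """Frequency at which a pure tone actually appears after ideal sampling.

    Tones below the Nyquist frequency come back unchanged; tones above it
    "fold" back into the range 0..Nyquist.
    """
    nearest_multiple = round(signal_hz / sample_rate_hz) * sample_rate_hz
    return abs(signal_hz - nearest_multiple)


def sample_cosine(freq_hz: float, sample_rate_hz: float, n_samples: int) -> list[float]:
    """Sample cos(2*pi*f*t) at evenly spaced instants t = n / sample_rate."""
    return [math.cos(2 * math.pi * freq_hz * n / sample_rate_hz) for n in range(n_samples)]


if __name__ == "__main__":
    fs = 44_100.0                          # CD sampling rate, in Hz
    print(nyquist_frequency(fs))           # 22050.0
    print(alias_frequency(1_000.0, fs))    # 1000.0 (below Nyquist: unchanged)
    print(alias_frequency(30_000.0, fs))   # 14100.0 (above Nyquist: aliased)

    # A 30 kHz tone and a 14.1 kHz tone yield the same samples at 44.1 kHz,
    # so the digital system has no way to tell them apart.
    high = sample_cosine(30_000.0, fs, 8)
    low = sample_cosine(14_100.0, fs, 8)
    print(all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(high, low)))  # True
```

Because a 30 kHz tone lies above the 22,050 Hz Nyquist frequency of a 44.1 kHz system, its samples are indistinguishable from those of a 14.1 kHz tone. This is why a digital system must remove content above the Nyquist frequency before sampling.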

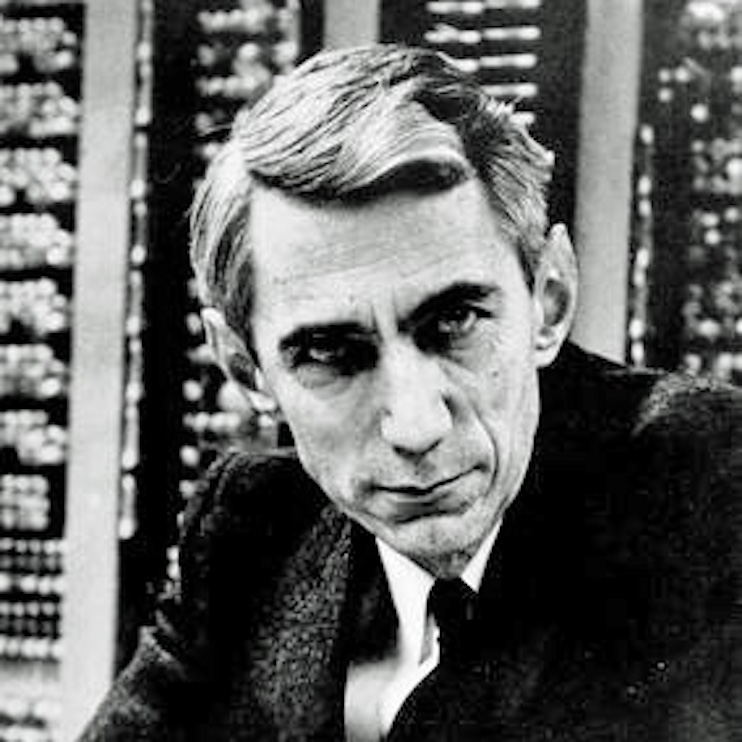

Claude Shannon

Claude Shannon (1916-2001) is considered the "father" of modern-day information theory. A mathematician and electrical engineer who laid the theoretical foundations for digital logic circuit design, Shannon built upon work done by Harry Nyquist and published "A Mathematical Theory of Communication" (1948), which opened the door for developments in, among many other things, digital signal processing. Shannon applied his brilliance to many eclectic pursuits, including juggling, early computer design and unicycling. He was also a major contributor to the field of cryptography and secure communication during WWII and beyond.